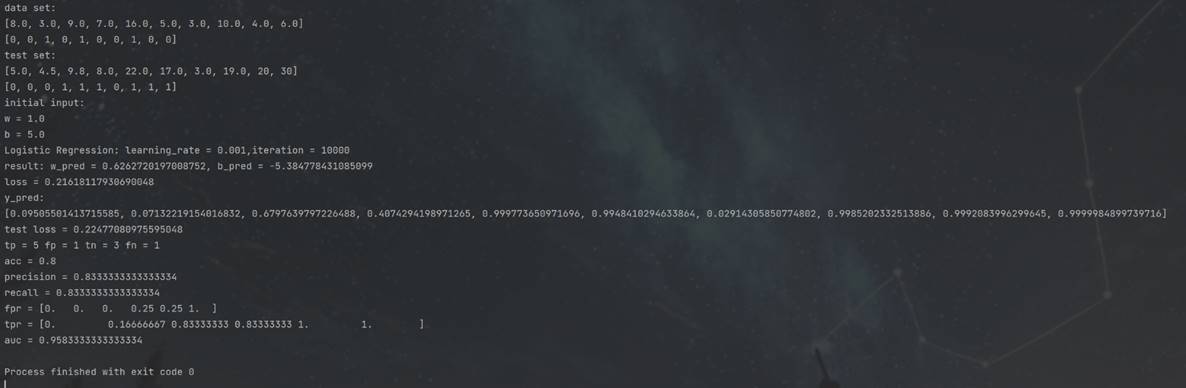

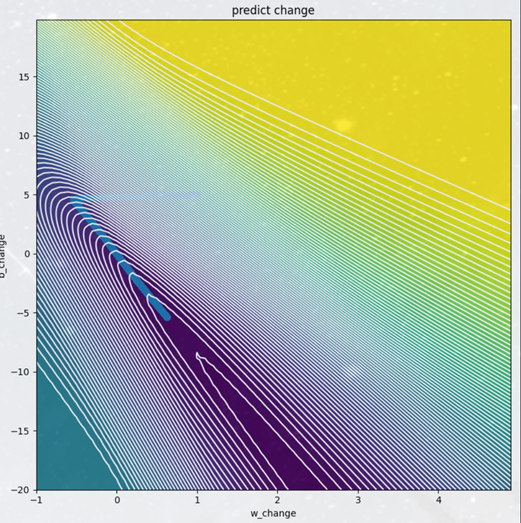

```python
import matplotlib.pyplot as plt
import numpy as np
from sklearn.metrics import roc_curve, auc

# Training set: one feature x and a binary label y
xdata = [8., 3., 9., 7., 16., 5., 3., 10., 4., 6.]
ydata = [0, 0, 1, 0, 1, 0, 0, 1, 0, 0]

# Test set
xtest = [5., 4.5, 9.8, 8., 22., 17., 3., 19., 20, 30]
ytest = [0, 0, 0, 1, 1, 1, 0, 1, 1, 1]

plt.cla()

# Initial parameters and the history of (w, b) recorded during training
w_init = 1.
b_init = 5.
w_change = [w_init]
b_change = [b_init]


def sigmoid(x):
    return 1. / (1. + np.exp(-x))
```

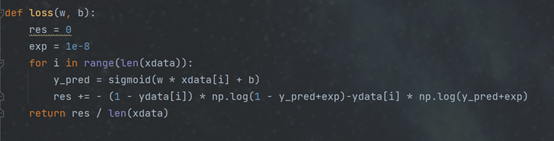

```python
def loss(w, b):
    """Average binary cross-entropy on the training set."""
    res = 0
    eps = 1e-8  # keeps the argument of log() away from 0
    for i in range(len(xdata)):
        y_pred = sigmoid(w * xdata[i] + b)
        res += -(1 - ydata[i]) * np.log(1 - y_pred + eps) - ydata[i] * np.log(y_pred + eps)
    return res / len(xdata)


def grad(w, b):
    """Gradient of the cross-entropy with respect to (w, b), summed (not averaged) over the training set."""
    w_grad = 0
    b_grad = 0
    for i in range(len(xdata)):
        y_pred = sigmoid(w * xdata[i] + b)
        w_grad += -(ydata[i] - y_pred) * xdata[i]
        b_grad += -(ydata[i] - y_pred)
    return w_grad, b_grad


def logistic_regression(w_pred, b_pred, lr=0.001, iter=10000):
    """Batch gradient descent; every intermediate (w, b) is appended to w_change / b_change."""
    for _ in range(iter):
        gradf = grad(w=w_pred, b=b_pred)
        # Step against the gradient with step size lr
        w_pred = float(w_pred - lr * gradf[0])
        b_pred = float(b_pred - lr * gradf[1])
        w_change.append(w_pred)
        b_change.append(b_pred)
    return w_pred, b_pred
```

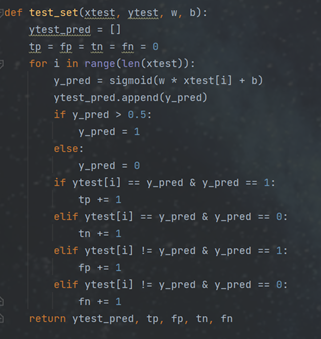
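With `p_i = sigmoid(w * x_i + b)`, the averaged cross-entropy in `loss` has derivatives `dL/dw = (1/n) * sum((p_i - y_i) * x_i)` and `dL/db = (1/n) * sum(p_i - y_i)`. The `grad` function returns these sums without the `1/n` factor. The following plain-Python sketch is a finite-difference check of that relationship. The helper names and the evaluation point `(0.3, -2.0)` are hypothetical choices. The epsilon is omitted from the loss here so that the loss is exactly differentiable.

```python
import math

# Training data copied from the listing above
xdata = [8., 3., 9., 7., 16., 5., 3., 10., 4., 6.]
ydata = [0, 0, 1, 0, 1, 0, 0, 1, 0, 0]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def mean_bce(w, b):
    """Average binary cross-entropy (no epsilon, so it is smooth everywhere it is finite)."""
    total = 0.0
    for x, y in zip(xdata, ydata):
        p = sigmoid(w * x + b)
        total += -(y * math.log(p) + (1 - y) * math.log(1 - p))
    return total / len(xdata)


def summed_grad(w, b):
    """Same formula as grad() in the listing: sums of (p - y) * x and (p - y)."""
    gw = gb = 0.0
    for x, y in zip(xdata, ydata):
        p = sigmoid(w * x + b)
        gw += (p - y) * x
        gb += p - y
    return gw, gb


def numeric_grad(f, w, b, h=1e-6):
    """Central finite differences of f with respect to w and b."""
    dw = (f(w + h, b) - f(w - h, b)) / (2 * h)
    db = (f(w, b + h) - f(w, b - h)) / (2 * h)
    return dw, db


w0, b0 = 0.3, -2.0  # hypothetical evaluation point
n = len(xdata)
gw, gb = summed_grad(w0, b0)
dw, db = numeric_grad(mean_bce, w0, b0)
print(gw / n, dw)  # the two numbers should agree to about 1e-9
print(gb / n, db)
```

Because `grad` returns a sum, each update in `logistic_regression` effectively moves by `lr * len(xdata)` times the gradient of the averaged `loss`.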

```python
def test_set(xtest, ytest, w, b):
    """Predict on the test set and count TP / FP / TN / FN at threshold 0.5."""
    ytest_pred = []  # predicted probabilities, reused later for the ROC curve
    tp = fp = tn = fn = 0
    for i in range(len(xtest)):
        y_pred = sigmoid(w * xtest[i] + b)
        ytest_pred.append(y_pred)
        label = 1 if y_pred > 0.5 else 0
        if ytest[i] == label and label == 1:
            tp += 1
        elif ytest[i] == label and label == 0:
            tn += 1
        elif ytest[i] != label and label == 1:
            fp += 1
        else:  # ytest[i] != label and label == 0
            fn += 1
    return ytest_pred, tp, fp, tn, fn


def test_loss(w, b):
    """Average binary cross-entropy on the test set."""
    res = 0
    eps = 1e-8  # keeps the argument of log() away from 0
    for i in range(len(xtest)):
        y_pred = sigmoid(w * xtest[i] + b)
        res += -(1 - ytest[i]) * np.log(1 - y_pred + eps) - ytest[i] * np.log(y_pred + eps)
    return res / len(xtest)


print("data set:")
print(xdata)
print(ydata)

plt.figure(1)
plt.scatter(xdata, ydata, label="training set")
plt.title("output")
plt.xlabel("x")
plt.ylabel("y")

print("test set:")
print(xtest)
print(ytest)
plt.scatter(xtest, ytest, marker='v', label="test set")
```

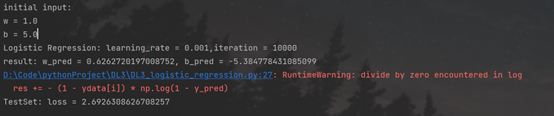

```python
print("initial input:")
print("w = " + str(w_init))
print("b = " + str(b_init))

learning_rate = 0.001
iter = 10000
print("Logistic Regression: learning_rate = " + str(learning_rate) + ", iteration = " + str(iter))
w_pred, b_pred = logistic_regression(w_pred=w_init, b_pred=b_init, lr=learning_rate, iter=iter)
print("result: w_pred = " + str(w_pred) + ", b_pred = " + str(b_pred))
print("loss = " + str(loss(w_pred, b_pred)))

# Draw the fitted sigmoid over both data sets
x = np.arange(0, 40, 0.1)
y = sigmoid(w_pred * x + b_pred)
plt.plot(x, y, label="fitted sigmoid")
plt.legend()
plt.show()
```

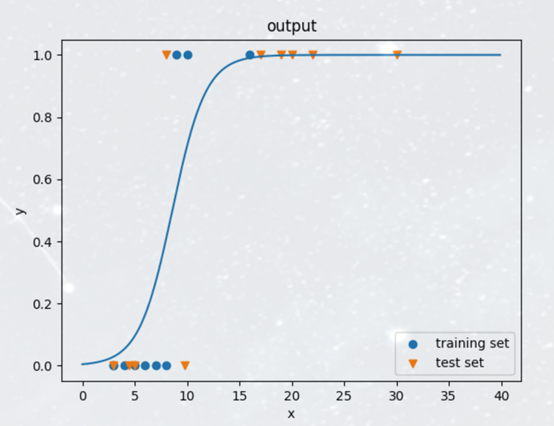
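With the 0.5 threshold used in `test_set`, the model predicts class 1 exactly when `w * x + b > 0`, because `sigmoid(z) > 0.5` if and only if `z > 0`. For a positive `w`, the fitted curve therefore splits the x-axis at `x = -b / w`. The sketch below illustrates this. The helpers `decision_boundary` and `predict_label` are hypothetical names, and the parameters `w = 0.5, b = -5.0` are made up rather than taken from the training run.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def decision_boundary(w, b):
    """x at which sigmoid(w * x + b) == 0.5, i.e. where w * x + b == 0."""
    if w == 0:
        raise ValueError("w == 0: the prediction does not depend on x")
    return -b / w


def predict_label(w, b, x, threshold=0.5):
    """Same rule as test_set(): label 1 if the predicted probability exceeds the threshold."""
    return 1 if sigmoid(w * x + b) > threshold else 0


w, b = 0.5, -5.0  # hypothetical parameters
x0 = decision_boundary(w, b)
print(x0, predict_label(w, b, 9.0), predict_label(w, b, 11.0))  # prints: 10.0 0 1
```

Test points to the right of the boundary are labeled 1 and points to the left are labeled 0, so the confusion counts depend only on where `-b / w` falls among the test inputs.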

```python
plt.figure(2)
# Evaluate on the test set at threshold 0.5
ytest_pred, tp, fp, tn, fn = test_set(xtest, ytest, w_pred, b_pred)
acc = (tp + tn) / len(xtest)
# Avoid ZeroDivisionError when there are no predicted (or no actual) positives
precision = tp / (tp + fp) if tp + fp > 0 else 0.0
recall = tp / (tp + fn) if tp + fn > 0 else 0.0

print("y_pred:")
print(ytest_pred)
print("test loss = " + str(test_loss(w_pred, b_pred)))
print("tp = " + str(tp) + " fp = " + str(fp) + " tn = " + str(tn) + " fn = " + str(fn))
print("acc = " + str(acc))
print("precision = " + str(precision))
print("recall = " + str(recall))
```

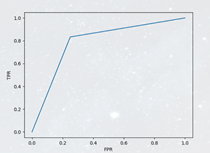

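```python
# The ROC curve is computed from the predicted probabilities, not the thresholded labels
fpr, tpr, thresholds = roc_curve(ytest, ytest_pred, pos_label=1)
print("fpr = " + str(fpr))
print("tpr = " + str(tpr))

roc_auc = auc(fpr, tpr)
print("auc = " + str(roc_auc))

plt.plot(fpr, tpr, 'k--', label='ROC (area = {0:.2f})'.format(roc_auc), lw=2)
plt.xlabel("FPR")
plt.ylabel("TPR")
plt.legend()  # required for the ROC label to be displayed
plt.show()
```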

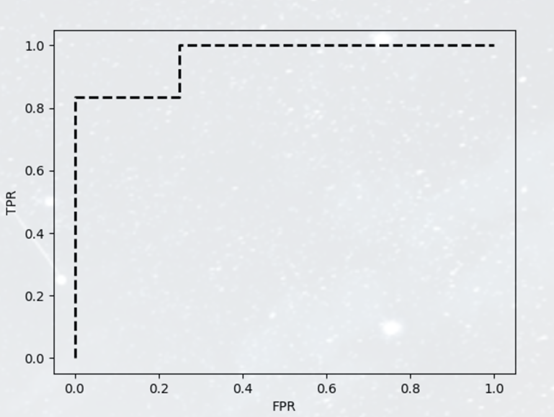

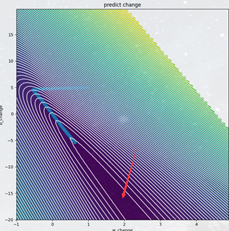
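`auc(fpr, tpr)` integrates the ROC curve with the trapezoidal rule. The same number can be obtained without sklearn. It equals the fraction of (positive, negative) pairs in which the positive example receives the higher score, with ties counted as one half. The plain-Python sketch below computes it both ways. The function names are hypothetical, and `scores` are made-up probabilities, not outputs of the listing.

```python
def pairwise_auc(labels, scores):
    """P(score of a random positive > score of a random negative); ties count 1/2."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def roc_points(labels, scores):
    """(FPR, TPR) pairs obtained by lowering the threshold through every distinct score."""
    n_pos = sum(1 for y in labels if y == 1)
    n_neg = len(labels) - n_pos
    points = [(0.0, 0.0)]
    for t in sorted(set(scores), reverse=True):
        tp = sum(1 for y, s in zip(labels, scores) if s >= t and y == 1)
        fp = sum(1 for y, s in zip(labels, scores) if s >= t and y == 0)
        points.append((fp / n_neg, tp / n_pos))
    return points


def trapezoid_area(points):
    """Area under a piecewise-linear curve given as (x, y) points sorted by x."""
    return sum((x1 - x0) * (y0 + y1) / 2 for (x0, y0), (x1, y1) in zip(points, points[1:]))


labels = [0, 0, 0, 1, 1, 1, 0, 1, 1, 1]  # ytest from the listing
scores = [0.1, 0.2, 0.6, 0.7, 0.9, 0.4, 0.3, 0.8, 0.95, 0.99]  # hypothetical probabilities
print(pairwise_auc(labels, scores), trapezoid_area(roc_points(labels, scores)))  # both 23/24
```

If sklearn is installed, `roc_auc_score(labels, scores)` from `sklearn.metrics` should return the same value for these inputs.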

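```python
# Loss surface over a (w, b) grid; loss() works element-wise on NumPy arrays
x = np.arange(-1, 5, 0.1)
y = np.arange(-20, 20, 0.2)
X, Y = np.meshgrid(x, y)
Z = loss(X, Y)

# plt.subplots() creates its own figure, so no separate plt.figure() call is needed
fig, ax = plt.subplots(figsize=(8, 8), dpi=100)
ax.contourf(X, Y, Z, 100)
ax.contour(X, Y, Z, 100, colors='white')

# Gradient-descent trajectory of (w, b)
ax.scatter(w_change, b_change)
ax.set_title("predict change")
ax.set_xlabel("w_change")
ax.set_ylabel("b_change")
plt.tight_layout()
plt.show()
```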

ROC curve issue

Modified function